Carinne Deeds, AYPF Policy Associate

Nine months after the passage of the Every Student Succeeds Act (ESSA), we at AYPF are still trying to fully understand the numerous provisions in the law related to the use of research evidence or evidence-based strategies. I won’t be providing a run-down of all of these provisions in this blog post,* and sadly I’m not sure I know the secret to making evidence great again. (Are election jokes getting old yet?) What I will share with you are some questions we at AYPF are asking about implementation, given what we know about the law thus far.

First, some brief context. ESSA, as many of you know, is the first federal education law to define the term “evidence-based” and to distinguish four separate tiers of evidence, based on the rigor of the research and the strength of the evidence. This characterization of research evidence is widely considered to be an improvement over the ill-defined “scientifically-based research” language under No Child Left Behind. Upon speaking with many state and national leaders, however, we’ve learned that there is still a lack of clarity and cohesion around what these evidence-related provisions will entail for states, localities, and the young people they serve. Below are a few of the questions we’ve heard from numerous states that we’re still grappling with as well.

First, some brief context. ESSA, as many of you know, is the first federal education law to define the term “evidence-based” and to distinguish four separate tiers of evidence, based on the rigor of the research and the strength of the evidence. This characterization of research evidence is widely considered to be an improvement over the ill-defined “scientifically-based research” language under No Child Left Behind. Upon speaking with many state and national leaders, however, we’ve learned that there is still a lack of clarity and cohesion around what these evidence-related provisions will entail for states, localities, and the young people they serve. Below are a few of the questions we’ve heard from numerous states that we’re still grappling with as well.

1. Is there sufficient evidence?

Are there enough studies that meet the criteria required for the top tiers of evidence? Is there a dearth of evidence on certain topics, populations, or areas (interventions in rural communities, for example)? As noted recently in Education Week, “the pool of high-quality research on education programs remains relatively small, sporadic, and focused on shorter-term gains for students.” So how can we use the limited amount of evidence we do have? And furthermore, how can we ensure that our gaps in research drive future local, state, and national research agendas?

2. What about studies with non-significant results?

What can we do with studies that fail to produce sufficient evidence, i.e., studies with null effects? What about studies with negative effects? Should we disregard the evidence from interventions that “didn’t work”? (Disclaimer: I know “didn’t work” is imprecise. I hope my statistics professor isn’t reading this.) Ultimately, how can states use all available evidence, or the lack of sufficient evidence, to inform their decision-making?

3. How can evidence be applied in different contexts?

To what extent does evidence that an intervention worked in one place mean that it will be effective in another city? Another state? In the words of Ash Vasudeva of the Carnegie Foundation for the Advancement of Teaching, “Just as one-size-doesn’t fit all when it comes to clothes or educational initiatives, one study doesn’t fit all district and school contexts. It’s important for all of us to remember that interventions—even those backed by high-quality evidence—are beginnings and not ends.” How can proven interventions be adapted to fit local contexts?

The answer to many of these questions depends greatly on the extent to which states and localities are able to “move from evidence-based practice to an evidence-based system,” as Dr. Marty West posits. This means doing more than just collecting and reporting data in order to comply with a federal mandate. An evidence-based system entails using evidence and data holistically for the purpose of continuous improvement. As Dr. Vivian Tseng of the William T. Grant Foundation stated, an “ongoing cycle of learning” is necessary regardless of the type of evidence you’re using. To build an evidence-based system, data must be collected and used to address the needs of decision makers and should drive a culture of continuous improvement.

There are plenty of intricacies and nuances involved in building this type of “system,” and I’m certainly not aware of all of them. One powerful tool I am familiar with is research-practice partnerships – deliberate, ongoing coalitions between researchers and practitioners within a state or a locality. To be clear, I’m not talking about partnering for the sake of partnering. What the William T. Grant Foundation, the National Network of Education Research-Practice Partnerships (NNERPP), and others around the country know to be effective are partnerships that serve as a mechanism for embedding a culture of continuous improvement within and between systems. Partnerships are by no means a silver bullet, but they can certainly facilitate the communication, coordination, and even the social networks necessary to ensure that research priorities are informed by the needs of a particular community.

Above all else, we see ESSA’s evidence-related provisions as an opportunity for states and localities to prioritize the process of ongoing learning in order to better serve their unique student bodies. AYPF, along with the William T. Grant Foundation and several other partners, will be exploring the questions listed above in the months to come, along with many others. Our findings will be shared with state and local leaders, as well as those hoping to support them moving forward.

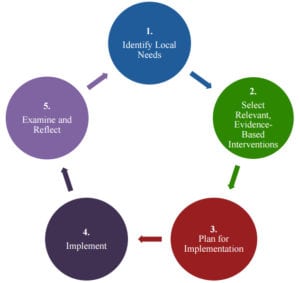

Note: New guidance issued by the Department of Education provides additional suggestions and tools for identifying, selecting, and implementing evidence-based activities, strategies, and interventions. We found this graphic to be a particularly helpful visualization of the process of continuous improvement to promote better outcomes for students.

*For those who would like an overview of the provisions related to evidence use, I like this one from Dr. Marty West, this one from Dr. Bill Penuel, and this one from Results for America.

Carinne Deeds is a Policy Associate at the American Youth Policy Forum.